MariaDB & K8s: Deploy MariaDB and WordPress using Persistent Volumes

In the previous blog, MariaDB & K8s: Create a Secret and use it in MariaDB deployment, we used the Secrets resource to hide confidential root user data, and in the blog before that in the series, MariaDB & K8s: Communication between containers/Deployments, we created 2 containers (namely MariaDB and phpmyadmin) in a Pod. That kind of deployment didn’t have any persistent volumes.

In this blog we are going to create separate Deployments for the MariaDB and WordPress applications, as well as a Service for each in order to connect them. Additionally, we will add a volume to the Pods of the MariaDB Deployment.

Configuration files

In the previous two blogs we created the MariaDB Secret and the MariaDB ConfigMap; here we assume they already exist in the cluster.

$ kubectl describe secret/mariadb-secret

Name: mariadb-secret

Namespace: default

Labels: <none>

Annotations: <none>

Type: Opaque

Data

====

mariadb-root-password: 6 bytes

$ kubectl describe cm mariadb-configmap

Name: mariadb-configmap

Namespace: default

Labels: <none>

Annotations: <none>

Data

====

database_url:

----

mariadb-internal-service

BinaryData

====

Events: <none>
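For readers who have not followed the earlier posts, the two resources described above could be recreated with manifests along these lines. This is a sketch, not the files from the previous blogs: the key names and the ConfigMap value are taken from the output above, while the password value `secret` is an assumption inferred from the 6-byte size and the `-psecret` option used later in this post.

```yaml
# Assumed reconstruction of mariadb-secret; the original file is in the previous blog.
apiVersion: v1
kind: Secret
metadata:
  name: mariadb-secret
type: Opaque
stringData:
  # stringData accepts plain text; Kubernetes stores it base64-encoded under data.
  mariadb-root-password: secret # assumed value (6 bytes)
---
# Assumed reconstruction of mariadb-configmap, matching the describe output above.
apiVersion: v1
kind: ConfigMap
metadata:
  name: mariadb-configmap
data:
  database_url: mariadb-internal-service
```

The ConfigMap value is simply the name of the MariaDB Service defined next, which is how WordPress will find the database.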

Let’s add a configuration file for the MariaDB Deployment and Service (GitHub file).

apiVersion: v1
kind: Service
metadata:
  name: mariadb-internal-service
spec:
  selector:
    app: mariadb
  ports:
    - protocol: TCP
      port: 3306
      targetPort: 3306
  clusterIP: None
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: mariadb-deployment
spec: # specification for deployment resource
  replicas: 1
  selector:
    matchLabels:
      app: mariadb
  template: # blueprint for Pod
    metadata:
      labels:
        app: mariadb # service will look for this label
    spec: # specification for Pod
      containers:
        - name: mariadb
          image: mariadb
          ports:
            - containerPort: 3306 # default one
          env:
            - name: MARIADB_ROOT_PASSWORD
              valueFrom:
                secretKeyRef:
                  name: mariadb-secret
                  key: mariadb-root-password
            - name: MARIADB_DATABASE
              value: wordpress

The Service created is a headless Service: it has no cluster IP allocated and does no load balancing. The only difference from the previous blog is that the database “wordpress” is created during container startup, which is a requirement for the WordPress Deployment.

Let’s add a configuration file for the WordPress Deployment and Service (GitHub file).

apiVersion: v1
kind: Service
metadata:
  name: wordpress
spec:
  selector:
    app: wordpress
  ports:
    - port: 80
      targetPort: 80
      protocol: TCP # default
      nodePort: 31000
  type: LoadBalancer
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: wordpress-deployment
spec: # specification for deployment resource
  replicas: 1
  selector:
    matchLabels:
      app: wordpress
  template: # blueprint for Pod
    metadata:
      labels:
        app: wordpress
    spec: # specification for Pod
      containers:
        - name: wordpress
          image: wordpress:latest
          ports:
            - containerPort: 80
          env:
            - name: WORDPRESS_DB_HOST
              valueFrom:
                configMapKeyRef:
                  name: mariadb-configmap
                  key: database_url
            - name: WORDPRESS_DB_PASSWORD
              valueFrom:
                secretKeyRef:
                  name: mariadb-secret
                  key: mariadb-root-password
            - name: WORDPRESS_DB_USER
              value: root
            - name: WORDPRESS_DEBUG
              value: "1"

The Service created is of type LoadBalancer (Minikube supports it), which exposes the Service externally and balances traffic across its Pods. We expose a fixed node port to be used as the external port; by default this number must be in the range 30000-32767. The Deployment takes the value of the environment variable WORDPRESS_DB_HOST from the ConfigMap and the value of WORDPRESS_DB_PASSWORD from the Secret, and exposes container port 80.

Create resources and verify

$ kubectl apply -f mariadb-configmap.yaml

configmap/mariadb-configmap created

$ kubectl apply -f mariadb-secret.yaml

secret/mariadb-secret created

$ kubectl apply -f mariadb-deployment-pvc.yaml

service/mariadb-internal-service created

deployment.apps/mariadb-deployment created

$ kubectl apply -f wordpress-deployment-pvc.yaml

service/wordpress created

deployment.apps/wordpress-deployment created

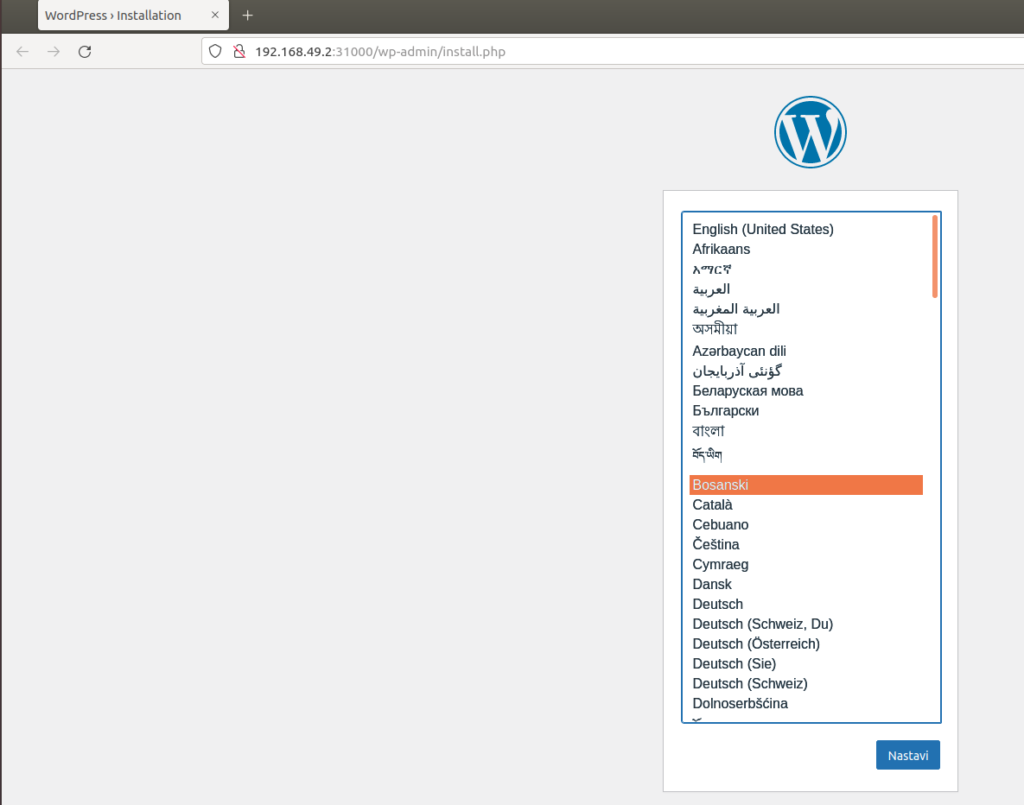

$ minikube service wordpress

|-----------|-----------|-------------|---------------------------|

| NAMESPACE | NAME | TARGET PORT | URL |

|-----------|-----------|-------------|---------------------------|

| default | wordpress | 80 | http://192.168.49.2:31000 |

|-----------|-----------|-------------|---------------------------|

🎉 Opening service default/wordpress in default browser...

The last command opens a URL in the browser where the WordPress installation can be completed.

Add Persistent Volume

When a container crashes, the kubelet restarts it, but the container starts from a clean state, so data written inside it is lost. To solve that problem, Kubernetes offers the Volume abstraction, which is tied to the Pod. A Pod can use multiple volume types: ephemeral volumes, which are tied to the lifetime of the Pod; ConfigMap and Secret volumes, whose data can be consumed as files in the Pod; and, of interest for this blog, the persistentVolumeClaim type, which is a request to mount a PersistentVolume into a Pod.

A PersistentVolume (PV) resource is a piece of storage in the cluster that has been manually provisioned by an administrator, or dynamically provisioned by Kubernetes using a StorageClass (the Minikube cluster used here has a default StorageClass that uses the hostPath provisioner).

A PersistentVolumeClaim (PVC) is a request for storage by a user that can be fulfilled by a PV. A claim requests a specific size and specific access modes.

PersistentVolumes and PersistentVolumeClaims are independent from Pod lifecycles and preserve data through restarting, rescheduling, and even deleting Pods.
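To make the manually provisioned case concrete, here is a minimal sketch of a PersistentVolume that an administrator might create by hand. It is not used in this blog, which relies on dynamic provisioning; the name `mariadb-pv-manual`, the storage class name `manual`, and the host path `/mnt/data` are hypothetical.

```yaml
apiVersion: v1
kind: PersistentVolume
metadata:
  name: mariadb-pv-manual # hypothetical name
spec:
  storageClassName: manual # hypothetical; a claim must request the same class to bind
  capacity:
    storage: 300M
  accessModes:
    - ReadWriteOnce
  persistentVolumeReclaimPolicy: Retain # keep the volume and its data after the claim is deleted
  hostPath:
    path: /mnt/data # hypothetical directory on the node; only sensible for single-node test clusters
```

A claim that asks for the same storage class, a compatible access mode, and no more than the offered capacity could then be bound to this volume.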

To see why this matters, let’s first add some data to the current MariaDB Pod, which has no persistent volume yet, and then restart it.

$ kubectl get pods -l app=mariadb

NAME READY STATUS RESTARTS AGE

mariadb-deployment-74f8c57cbf-t4whv 1/1 Running 0 73m

$ kubectl exec mariadb-deployment-74f8c57cbf-t4whv -- mariadb -uroot -psecret -e "create database if not exists mytest;use mytest; create table t(t int); insert into t values (1),(2); select * from t";

t

1

2

# In the first terminal, watch the state of the Pods (the MariaDB Pod will be terminated, the wordpress Pods keep running)

$ kubectl get pods -w

NAME READY STATUS RESTARTS AGE

mariadb-deployment-74f8c57cbf-t4whv 1/1 Running 0 78m

wordpress-deployment-79697d4fd5-4qsn7 1/1 Running 0 78m

wordpress-deployment-79697d4fd5-s4gc9 1/1 Running 0 78m

mariadb-deployment-74f8c57cbf-t4whv 1/1 Terminating 0 79m

mariadb-deployment-74f8c57cbf-t4whv 0/1 Terminating 0 79m

mariadb-deployment-74f8c57cbf-t4whv 0/1 Terminating 0 79m

# Scaling the Deployment to 0 replicas terminates the Pod.

# Start command in the second terminal

$ kubectl scale deployment mariadb-deployment --replicas=0

deployment.apps/mariadb-deployment scaled

# Watch the creation of the new MariaDB Pod; start the watch before running the command that scales the replicas back up

# The wordpress Pods are still running and are not affected

$ kubectl get pods -w

NAME READY STATUS RESTARTS AGE

wordpress-deployment-79697d4fd5-4qsn7 1/1 Running 0 83m

wordpress-deployment-79697d4fd5-s4gc9 1/1 Running 0 83m

mariadb-deployment-74f8c57cbf-qd786 0/1 Pending 0 0s

mariadb-deployment-74f8c57cbf-qd786 0/1 Pending 0 0s

mariadb-deployment-74f8c57cbf-qd786 0/1 ContainerCreating 0 0s

mariadb-deployment-74f8c57cbf-qd786 1/1 Running 0 3s

# In other terminal

$ kubectl scale deployment mariadb-deployment --replicas=1

deployment.apps/mariadb-deployment scaled

# Check existence of already created database 'mytest'

$ kubectl exec svc/mariadb-internal-service -- mariadb -uroot -psecret -e "show databases"

Database

information_schema

mysql

performance_schema

sys

wordpress

As seen above, the database “mytest” no longer exists. Scaling the Deployment to zero replicas terminates the old Pod, and scaling back up creates a new Pod, which is analogous to a Pod restart.

Before scaling back to a single replica, only the WordPress Pods are running; after scaling, a new MariaDB Pod appears, and its STATUS column shows the phases of the Pod lifecycle.

Let’s now change the configuration file by adding a PersistentVolumeClaim and run the same scenario afterwards.

First let’s delete the old MariaDB Deployment:

$ kubectl delete -f mariadb-deployment-pvc.yaml

service "mariadb-internal-service" deleted

deployment.apps "mariadb-deployment" deleted

Many cluster environments have a default StorageClass installed. When a StorageClass is not specified in the PersistentVolumeClaim, the cluster’s default StorageClass is used instead. We can check for the StorageClass:

$ kubectl get storageclass

NAME PROVISIONER RECLAIMPOLICY VOLUMEBINDINGMODE ALLOWVOLUMEEXPANSION AGE

standard (default) k8s.io/minikube-hostpath Delete Immediate false 145d

When a PersistentVolumeClaim is created, a PersistentVolume is dynamically provisioned based on the StorageClass configuration.

Let’s add a PersistentVolumeClaim resource called “mariadb-pv-claim” with the ReadWriteOnce access mode and a storage request of 300 MB (300M). Since no StorageClass is specified, it uses the default StorageClass (dynamic provisioning of the PersistentVolume).

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: mariadb-pv-claim
  labels:
    app: mariadb
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 300M

Additionally, we update the Pod specification of the Deployment as follows:

containers:
  - name: mariadb
    image: mariadb
    ports:
      - containerPort: 3306 # default one
    env:
      - name: MARIADB_ROOT_PASSWORD
        valueFrom:
          secretKeyRef:
            name: mariadb-secret
            key: mariadb-root-password
      - name: MARIADB_DATABASE
        value: wordpress
    volumeMounts:
      - name: mariadb-pv
        mountPath: /var/lib/mysql
volumes:
  - name: mariadb-pv
    persistentVolumeClaim:
      claimName: mariadb-pv-claim

In the container “mariadb”, the volume mount “mariadb-pv” is mounted at the default data directory path inside the container, /var/lib/mysql. The mount name refers to the Pod-level volume “mariadb-pv”, which is of type persistentVolumeClaim and references the PersistentVolumeClaim resource “mariadb-pv-claim” in the cluster, so the storage behind that path is sized according to the claim.

Now let’s apply this Deployment, verify the results, and again create the database in a Pod, restart the Pod, and check whether the old data is still there.

$ kubectl apply -f mariadb-deployment-pvc.yaml

persistentvolumeclaim/mariadb-pv-claim created

service/mariadb-internal-service created

deployment.apps/mariadb-deployment created

# Get information about pvc resource - pvc is bound to the pv, check kubectl get pv

$ kubectl get pvc -l app=mariadb

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

mariadb-pv-claim Bound pvc-57467ba5-bf6e-4b91-8741-c6f24e9c5861 300M RWO standard 31s

# Get the pods

$ kubectl get pods -l app=mariadb

NAME READY STATUS RESTARTS AGE

mariadb-deployment-58c7c4d75c-h45nl 1/1 Running 0 100s

# Watch in first terminal

$ kubectl get pods -w -l app=mariadb

NAME READY STATUS RESTARTS AGE

mariadb-deployment-58c7c4d75c-h45nl 1/1 Running 0 3m53s

mariadb-deployment-58c7c4d75c-h45nl 1/1 Terminating 0 4m13s

mariadb-deployment-58c7c4d75c-lsfdw 0/1 Pending 0 0s

mariadb-deployment-58c7c4d75c-lsfdw 0/1 Pending 0 0s

mariadb-deployment-58c7c4d75c-lsfdw 0/1 ContainerCreating 0 0s

mariadb-deployment-58c7c4d75c-h45nl 0/1 Terminating 0 4m15s

mariadb-deployment-58c7c4d75c-h45nl 0/1 Terminating 0 4m15s

mariadb-deployment-58c7c4d75c-h45nl 0/1 Terminating 0 4m15s

mariadb-deployment-58c7c4d75c-lsfdw 1/1 Running 0 4s

# Execute command in the second terminal - note above that new Pod has been created

$ kubectl scale deploy mariadb-deployment --replicas=0 && kubectl scale deploy mariadb-deployment --replicas=1

deployment.apps/mariadb-deployment scaled

deployment.apps/mariadb-deployment scaled

# Verify results from newly created Pod by inspecting data created in the old Pod

$ kubectl exec mariadb-deployment-58c7c4d75c-lsfdw -- mariadb -uroot -psecret -e "show databases like '%test%'; use mytest; select * from t;"

Database (%test%)

mytest

t

1

2

As can be seen, the data now survives Pod restart and termination.

We can have multiple PVC resources for a single Deployment. As an exercise, try to create a PVC resource for the WordPress Deployment, mounted at the path /var/www/html.
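One possible starting point for the WordPress PVC mentioned above is sketched here. It mirrors the MariaDB claim from this blog; the names `wordpress-pv-claim` and `wordpress-pv` and the 300M size are assumptions, not values from the original files.

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: wordpress-pv-claim # hypothetical name
  labels:
    app: wordpress
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 300M # assumed size
```

The Pod specification of the WordPress Deployment would then mount the claim. Only the changed part is shown; the env entries stay as in the manifest shown earlier.

```yaml
# Fragment of the Pod spec in wordpress-deployment, not a complete manifest
containers:
  - name: wordpress
    image: wordpress:latest
    volumeMounts:
      - name: wordpress-pv # hypothetical volume name
        mountPath: /var/www/html
volumes:
  - name: wordpress-pv
    persistentVolumeClaim:
      claimName: wordpress-pv-claim
```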

This way we have achieved data persistence and deployed a stateful application.

Using a Deployment is not recommended as a way to deploy stateful applications, since Deployments are designed for stateless applications: all replicas of a Deployment share the same PersistentVolumeClaim, so only volumes with the ReadOnlyMany or ReadWriteMany access mode work with multiple replicas. Even a Deployment with one replica using a ReadWriteOnce volume is not recommended: with the default Deployment strategy (RollingUpdate), a second Pod is created before the old one is removed, and a deadlock may occur (more about this at this link).
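A commonly used way to avoid the situation described above, for a single-replica Deployment with a ReadWriteOnce volume, is to switch the Deployment strategy from the default RollingUpdate to Recreate, so that the old Pod is terminated before the new one is created. The fragment below is a sketch of that change against the mariadb-deployment manifest from this blog; it is not part of the original files.

```yaml
# Fragment of mariadb-deployment: only the strategy field is new
apiVersion: apps/v1
kind: Deployment
metadata:
  name: mariadb-deployment
spec:
  replicas: 1
  strategy:
    type: Recreate # terminate the old Pod before creating the new one
  selector:
    matchLabels:
      app: mariadb
  # template: unchanged from the manifest shown earlier
```

The trade-off is a short period of downtime during each update, in exchange for never having two Pods compete for the same volume.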

Conclusion and future work

This blog showed how to create 2 Deployments and how to connect them via a Service. We also applied previously learned K8s concepts in order to exploit more of the K8s API.

In the database example, we showed the need for data consistency and the ephemeral nature of Pods, as well as introduced the PersistentVolumeClaim resource for dynamic provisioning on K8s clusters that helps in solving that kind of problem. However, this blog is just for demonstration purposes and learning the concepts. In the following blogs we will create a StatefulSet application, without Deployments, that is used to ensure data consistency.

You are welcome to chat about it on Zulip.

Read more

- Start MariaDB in K8s

- MariaDB & K8s: Communication between containers/Deployments

- MariaDB & K8s: Create a Secret and use it in MariaDB deployment

- MariaDB & K8s: Deploy MariaDB and WordPress using Persistent Volumes

- Create statefulset MariaDB application in K8s

- MariaDB replication using containers

- MariaDB & K8s: How to replicate MariaDB in K8s